Key Insights

- Data breaches now cost millions of dollars per incident, and global cybercrime damages run into the trillions each year. These costs show that traditional protection methods are no longer enough.

- Tokenization replaces sensitive data with tokens, which limits where real data exists. Fewer exposure points mean lower risk and easier compliance management.

- The success of a tokenization platform depends on its design decisions and technology stack. The right setup improves performance, security, and long-term reliability.

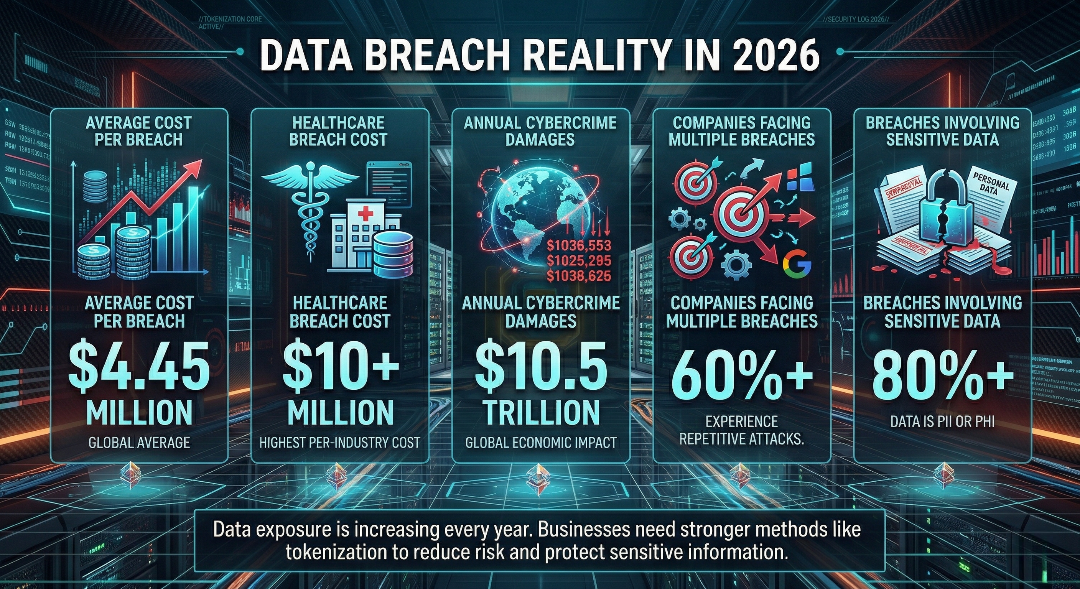

Data breaches now occur at a steady pace across industries. IBM's 2023 Cost of a Data Breach Report put the global average cost at $4.45 million per incident, and in healthcare the average exceeded $10 million. At the same time, Cybersecurity Ventures projects that global cybercrime damages will reach $10.5 trillion annually by 2025.

These numbers show a clear shift. Data loss is no longer occasional. It is a regular business risk that affects companies of all sizes.

A single breach can damage years of trust. Customers stop sharing their information, and business partners begin to question reliability. Attackers do not just steal data. They resell it and reuse it, which increases long-term impact.

Why does this matter for everyday systems? Many companies still store sensitive data across multiple systems. Each location creates another point of risk. Tokenization reduces this exposure by removing real data from daily operations and limiting where it exists.

What Is a Data Tokenization Platform?

Core Concept Explained in Simple Terms

A data tokenization platform replaces sensitive data with a token. The original data is stored in a secure vault. Systems use the token for processing, and only authorized requests can retrieve the real data. Think of a movie ticket. The ticket gives you access, but it does not reveal your identity or payment details. In the same way, a token represents data without exposing it. This setup keeps sensitive data away from regular systems. It reduces risk during storage, transfer, and processing.
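To make the ticket analogy concrete, here is a minimal Python sketch of vault-based tokenization. The `TokenVault` class, the `tok_` prefix, and the boolean `authorized` flag are hypothetical simplifications: a real vault would be an encrypted, access-controlled datastore, and authorization would come from a proper policy check rather than a flag.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a secure vault (hypothetical; a real vault is an
    encrypted, access-controlled datastore)."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # The token is random, so it carries no information about the value.
        token = "tok_" + secrets.token_urlsafe(16)
        self._store[token] = value
        return token

    def detokenize(self, token: str, authorized: bool) -> str:
        # Only authorized callers may turn a token back into real data.
        if not authorized:
            raise PermissionError("caller is not allowed to detokenize")
        return self._store[token]


vault = TokenVault()
token = vault.tokenize("4111111111111111")
print(token)                                   # a random tok_... value
print(vault.detokenize(token, authorized=True))
```

Downstream systems would only ever see and store `token`; the card number stays inside the vault object.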

Types of Tokenization (Format-Preserving vs Non-Preserving)

Different systems need different token formats. The choice depends on how the data is used after tokenization.

- Format-preserving tokenization

Tokens follow the same structure as the original data. A credit card number stays in a numeric format. This helps systems that expect a fixed pattern.

- Non-preserving tokenization

Tokens do not match the original format. They may include random characters or have a different length. This offers stronger protection because the token reveals nothing about the structure of the original data.

Many platforms use both methods. They select the format based on system compatibility and security needs.
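The sketch below contrasts the two token styles, assuming each generated token is stored in a vault that maps it back to the original value. The function names are hypothetical, and keeping the last four digits readable is an assumed display convention. This is random substitution, not format-preserving encryption such as NIST FF1.

```python
import secrets
import string


def format_preserving_token(card_number: str, keep_last: int = 4) -> str:
    """Random digits of the same length; the last `keep_last` digits stay readable."""
    if not card_number.isdigit():
        raise ValueError("expected digits only")
    cut = max(len(card_number) - keep_last, 0)
    random_part = "".join(secrets.choice(string.digits) for _ in range(cut))
    return random_part + card_number[cut:]


def non_preserving_token() -> str:
    """Opaque token: fixed length, hex characters, unrelated to the input format."""
    return secrets.token_hex(16)


fp = format_preserving_token("4111111111111111")
print(fp)                      # 16 digits ending in 1111, fits a card-number column
print(non_preserving_token())  # 32 hex characters, no resemblance to a card number
```

A legacy system that validates "16 digits" keeps working with the first style; the second style is safer wherever the consuming system does not care about format.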

Key Components of a Tokenization System

A tokenization platform includes multiple parts that work together. Each part handles a specific task.

- Token vault

Stores original sensitive data in a secure location.

- Tokenization engine

Generates tokens and manages the mapping between tokens and real data.

- Access control system

Defines who can view or retrieve sensitive data.

- APIs and integration layer

Allows applications to request tokens or retrieve data without direct exposure.

- Monitoring and logging tools

Track access and record activity for audits and security checks.

Each component supports data protection without interrupting normal system operations.

Who Needs Tokenization Platforms in 2026?

Fintech and Payment Gateways

Financial systems process thousands of transactions every minute. Each transaction includes card numbers, account details, and user identities. This makes them a frequent target for attacks.

Tokenization replaces card details with tokens during transactions. Even if attackers intercept the data, they get useless values. Payment providers also reduce the number of systems that store real card data. Fewer systems in PCI DSS scope means less compliance effort and lighter audits.

Healthcare and Sensitive Patient Data

Hospitals and clinics store patient records that include medical history and insurance details. A single breach can expose thousands of records at once. This affects patient trust and may delay treatment processes.

Tokenization keeps sensitive records out of everyday systems. Medical staff can access required details through secure requests. The actual data remains in a protected vault. This setup supports privacy rules and keeps workflows stable.

E-commerce and Customer Data Protection

Online stores collect names, addresses, and payment details from customers. Daily transactions increase the volume of stored data. More data means more points of risk.

Tokenization replaces customer details during storage and processing. Systems work with tokens instead of real data. Businesses still track orders and user activity without storing sensitive information across multiple services.

SaaS Platforms Handling PII

Software platforms store user data across servers, regions, and services. Managing personal data becomes complex as the user base grows. Each system that stores real data adds another point of risk.

Tokenization limits where actual data is stored. Applications continue to function using tokens. This reduces the chances of data leaks and simplifies compliance across different regions.

Enterprises Moving to Zero-Trust Architectures

Many companies now follow a zero-trust model. Every request must be verified, even from internal systems. This model reduces blind trust but increases the need to limit data exposure.

Tokenization fits well in this setup. Users and systems interact with tokens instead of real data. Even if access is granted, the actual data remains protected. This reduces risk across internal and external systems.

Key Features Every High-Performing Tokenization Platform Must Have

Vault-Based vs Vaultless Tokenization

A tokenization platform starts with a choice: should it store original data in a vault or avoid storage completely? Vault-based systems keep sensitive data in a secure database and map it to tokens. This allows controlled access and easier retrieval, but the vault itself needs strict protection. Vaultless systems use keyed algorithms to create tokens without keeping a central store of original data. This reduces storage risk and shifts the security burden onto the keys. It can add complexity when systems need to retrieve original values. The right choice depends on how often the system needs access to real data.
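One way a vaultless system can stay reversible without a lookup table is a keyed Feistel network over the digits, the idea behind format-preserving encryption modes such as NIST FF1. The sketch below is a simplified illustration under stated assumptions (HMAC-SHA256 as the round function, 10 rounds, a hardcoded demo key); it is not FF1 and not vetted cryptography, and it needs a reasonably large input domain to be meaningful.

```python
import hashlib
import hmac

ROUNDS = 10  # assumed; must be even so the final halves match the split in detokenize


def _prf(key: bytes, round_no: int, half: str, width: int) -> int:
    # Keyed pseudo-random function: HMAC-SHA256 over the round number and one half,
    # reduced to a number with `width` decimal digits.
    digest = hmac.new(key, f"{round_no}:{half}".encode(), hashlib.sha256).digest()
    return int.from_bytes(digest, "big") % (10 ** width)


def vaultless_tokenize(key: bytes, digits: str) -> str:
    if len(digits) < 2 or not digits.isdigit():
        raise ValueError("expected at least two decimal digits")
    u = len(digits) // 2
    v = len(digits) - u
    a, b = digits[:u], digits[u:]
    for i in range(ROUNDS):
        m = u if i % 2 == 0 else v          # width of the half being updated
        c = (int(a) + _prf(key, i, b, m)) % (10 ** m)
        a, b = b, str(c).zfill(m)           # swap halves; output stays all digits
    return a + b                            # same length and alphabet as the input


def vaultless_detokenize(key: bytes, token: str) -> str:
    if len(token) < 2 or not token.isdigit():
        raise ValueError("expected at least two decimal digits")
    u = len(token) // 2
    v = len(token) - u
    a, b = token[:u], token[u:]
    for i in reversed(range(ROUNDS)):
        m = u if i % 2 == 0 else v
        # Undo round i: the old right half is the current left half.
        old_b = a
        old_a = (int(b) - _prf(key, i, old_b, m)) % (10 ** m)
        a, b = str(old_a).zfill(m), old_b
    return a + b


key = b"demo-key-never-hardcode"  # hypothetical; a real key would come from a KMS/HSM
token = vaultless_tokenize(key, "4111111111111111")
assert vaultless_detokenize(key, token) == "4111111111111111"
```

Nothing is stored: anyone holding the key can reverse the token, which is exactly why key protection replaces vault protection in this model.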

High-Speed Token Generation and Retrieval

Tokenization sits between user actions and system responses. Delays can slow down payments, logins, or API calls. A reliable platform processes thousands of requests each second. Token creation and lookup must happen in milliseconds. This keeps applications responsive and avoids user frustration. Slow systems lead to drop-offs. Fast systems keep users engaged.

Role-Based Access Control (RBAC)

Not every user needs full access to sensitive data. Role-based access control defines who can see or retrieve information. For example, a support team member may view masked data. A compliance officer may access full details. Each role has specific permissions. This reduces unnecessary exposure and keeps access limited to those who need it.
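The support-agent and compliance-officer example maps directly to a small role table. In this sketch the role names, the two permissions, and the masking rule (show the last four characters) are hypothetical; a real platform would load roles from a policy store.

```python
from enum import Enum


class Permission(Enum):
    VIEW_MASKED = "view_masked"
    VIEW_FULL = "view_full"


# Hypothetical role definitions; a real platform would load these from policy config.
ROLE_PERMISSIONS = {
    "support_agent": {Permission.VIEW_MASKED},
    "compliance_officer": {Permission.VIEW_MASKED, Permission.VIEW_FULL},
}


def mask(value: str, visible: int = 4) -> str:
    # Replace everything except the last `visible` characters with '*'.
    cut = max(len(value) - visible, 0)
    return "*" * cut + value[cut:]


def reveal(role: str, value: str) -> str:
    """Return only what the given role is allowed to see of a detokenized value."""
    perms = ROLE_PERMISSIONS.get(role, set())
    if Permission.VIEW_FULL in perms:
        return value
    if Permission.VIEW_MASKED in perms:
        return mask(value)
    raise PermissionError(f"role {role!r} has no access")


print(reveal("support_agent", "4111111111111111"))       # ************1111
print(reveal("compliance_officer", "4111111111111111"))  # 4111111111111111
```

Unknown roles get an empty permission set and are refused, so access is denied by default.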

API-First Architecture for Easy Integration

Modern applications rely on APIs for communication. A tokenization platform must offer clear and consistent APIs. Developers use these APIs to generate tokens, retrieve data, and validate requests. This allows easy integration with existing systems. It reduces development time and keeps workflows consistent across services.

Audit Logging and Monitoring Capabilities

Every access to sensitive data must be recorded. Logs capture who accessed data, when it happened, and what actions were taken. Monitoring systems track unusual patterns, such as repeated access attempts or sudden spikes in requests. Alerts notify teams when something looks suspicious. This helps teams respond quickly and supports compliance checks.
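A minimal sketch of an audit record that captures who, when, and what. The field names are assumptions, and the Python list stands in for an append-only log store. Note that the record holds the token, never the raw value.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    actor: str      # who made the request
    action: str     # what was done, e.g. "tokenize" or "detokenize"
    token: str      # which token was touched (never the raw value)
    allowed: bool   # outcome of the access check
    timestamp: str  # when it happened, ISO 8601 in UTC


def record(log: list[str], actor: str, action: str, token: str, allowed: bool) -> AuditEvent:
    event = AuditEvent(actor, action, token, allowed,
                       datetime.now(timezone.utc).isoformat())
    log.append(json.dumps(asdict(event)))  # one JSON line per event, append-only
    return event


audit_log: list[str] = []
record(audit_log, "alice", "detokenize", "tok_abc123", allowed=True)
print(audit_log[0])
```

JSON lines are easy to ship to whatever log pipeline or SIEM the monitoring layer uses.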

Multi-Region Deployment for Global Compliance

Data laws differ across countries. Some regions require data to remain within local boundaries. A tokenization platform with multi-region support stores and processes data in specific locations. This helps companies meet local rules without changing system design. It also improves performance by keeping services closer to users in different regions.
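One way to keep data in-region is to pick the vault by residency rule and embed the region in the token, so any service can route a lookup without a central index. The country-to-region table, region names, and token format below are hypothetical.

```python
import secrets

# Hypothetical residency rules: customer country -> region whose vault must hold the data.
REGION_FOR_COUNTRY = {"DE": "eu-central", "FR": "eu-central", "US": "us-east", "IN": "ap-south"}


class RegionalVaults:
    def __init__(self, regions: set[str]) -> None:
        self._vaults: dict[str, dict[str, str]] = {region: {} for region in regions}

    def tokenize(self, country: str, value: str) -> str:
        region = REGION_FOR_COUNTRY[country]           # residency rule picks the region
        token = f"{region}.{secrets.token_hex(8)}"     # region prefix makes tokens routable
        self._vaults[region][token] = value            # value is stored only in that region
        return token

    def detokenize(self, token: str) -> str:
        region, _ = token.split(".", 1)
        return self._vaults[region][token]             # lookup stays inside that region


vaults = RegionalVaults(set(REGION_FOR_COUNTRY.values()))
t = vaults.tokenize("DE", "anna@example.de")
print(t)  # eu-central.<random hex>
```

In a real deployment each region's vault would be a separate service and database; the prefix only tells callers which one to ask.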


Step-by-Step: How to Build a Data Tokenization Platform from Scratch

Define Your Data Protection Scope

Start with a clear list of data that needs protection. This includes card details, personal records, or internal business data. Not every dataset needs the same level of security. Map where this data lives and how it moves. For example, check databases, APIs, and third-party services. A simple flow diagram helps here. This step sets the direction for design and reduces guesswork later.

Choose Tokenization Model (Vault or Vaultless)

Once the data scope is clear, select the tokenization model. Vault-based systems store original data in a secure location. This works well when systems need to retrieve real values often. Vaultless systems avoid storing original data in one place. They rely on algorithms to generate tokens. This reduces storage risk but can limit retrieval options. This choice affects system design, speed, and maintenance effort.

Design Secure Token Mapping Mechanism

Token mapping links tokens with original data. This layer must stay protected at all times. For vault-based systems, secure the database with strict access control. Limit who can read or write data. Use encryption for stored values. For vaultless systems, focus on strong algorithms. Tokens should not reveal patterns or allow anyone without the key to reverse them. A weak mapping system can expose sensitive data.
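For a vault-based mapping, two details matter: tokens must be unique, and repeated values often need the same token without the table keeping a searchable plaintext column. The sketch below uses random tokens with a collision check plus an HMAC "blind index"; `index_key`, the class name, and the same-value-same-token policy are assumptions, and encryption of stored values is assumed to happen in the storage layer (not shown).

```python
import hashlib
import hmac
import secrets


class MappingStore:
    """Hypothetical token mapping table with a blind index for deduplication."""

    def __init__(self, index_key: bytes) -> None:
        self._index_key = index_key             # secret used only for the blind index
        self._by_token: dict[str, str] = {}     # token -> value (encrypted at rest in practice)
        self._by_index: dict[str, str] = {}     # HMAC(value) -> token, no plaintext column

    def _blind_index(self, value: str) -> str:
        return hmac.new(self._index_key, value.encode(), hashlib.sha256).hexdigest()

    def tokenize(self, value: str) -> str:
        idx = self._blind_index(value)
        if idx in self._by_index:               # same value -> same token
            return self._by_index[idx]
        while True:
            token = secrets.token_hex(16)       # random, so there is no pattern to reverse
            if token not in self._by_token:     # retry on the (very unlikely) collision
                break
        self._by_token[token] = value
        self._by_index[idx] = token
        return token

    def detokenize(self, token: str) -> str:
        return self._by_token[token]


store = MappingStore(index_key=b"hypothetical-index-key")
t1 = store.tokenize("alice@example.com")
```

Whether identical values should share a token is a design decision: it enables joins and deduplication but reveals when two records hold the same value.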

Build Scalable APIs for Token Access

APIs act as the bridge between applications and the token system. They handle token creation, retrieval, and validation. Keep APIs simple and consistent. Use clear request and response formats. Developers should understand them quickly without confusion. Fast and stable APIs keep the system reliable under heavy traffic.
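"Clear request and response formats" can be pinned down as a small contract. This framework-agnostic sketch assumes a hypothetical REST-style shape (`POST /tokens`, `GET /tokens/<token>`) and a single error format; authentication is deliberately left out here.

```python
import json
import secrets

_VAULT: dict[str, str] = {}  # in-memory stand-in for the vault


def handle(method: str, path: str, body: str) -> tuple[int, str]:
    """Hypothetical contract:
    POST /tokens          {"value": ...} -> 201 {"token": ...}
    GET  /tokens/<token>                 -> 200 {"value": ...}
    Every error has the same shape: {"error": {"code": ..., "message": ...}}."""

    def error(status: int, code: str, message: str) -> tuple[int, str]:
        return status, json.dumps({"error": {"code": code, "message": message}})

    if method == "POST" and path == "/tokens":
        try:
            value = json.loads(body)["value"]
        except (ValueError, KeyError, TypeError):
            return error(400, "invalid_request", "body must be JSON with a 'value' field")
        token = "tok_" + secrets.token_hex(12)
        _VAULT[token] = value
        return 201, json.dumps({"token": token})

    if method == "GET" and path.startswith("/tokens/"):
        token = path[len("/tokens/"):]
        if token not in _VAULT:
            return error(404, "not_found", "unknown token")
        return 200, json.dumps({"value": _VAULT[token]})

    return error(404, "not_found", f"no route for {method} {path}")


status, body = handle("POST", "/tokens", json.dumps({"value": "4111111111111111"}))
print(status, body)  # 201 and a JSON body with the new token
```

Because every error shares one shape, client code needs a single error-handling path regardless of which endpoint failed.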

Implement Strong Authentication & Authorization

Every request must pass identity checks. Authentication confirms who is making the request. Authorization decides what they can access. Use multi-factor authentication for added protection. Define roles and permissions clearly. This limits access to sensitive data and reduces misuse.
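For service-to-service calls, one common pattern (assumed here, not prescribed by any standard) is HMAC request signing with a timestamp for authentication, followed by a scope check for authorization. The client registry, secrets, and 300-second replay window are hypothetical; multi-factor authentication for human users is a separate layer not shown.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical client registry: shared secret for authentication, scopes for authorization.
CLIENTS = {
    "billing-service": {"secret": b"billing-secret", "scopes": {"tokenize"}},
    "fraud-service": {"secret": b"fraud-secret", "scopes": {"tokenize", "detokenize"}},
}
MAX_SKEW_SECONDS = 300  # assumed replay window


def sign(secret: bytes, client_id: str, action: str, timestamp: int) -> str:
    message = f"{client_id}|{action}|{timestamp}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def check_request(client_id: str, action: str, timestamp: int, signature: str,
                  now: Optional[float] = None) -> None:
    now = time.time() if now is None else now
    client = CLIENTS.get(client_id)
    # Authentication: is the caller who it claims to be, and is the request fresh?
    if client is None or abs(now - timestamp) > MAX_SKEW_SECONDS:
        raise PermissionError("authentication failed")
    expected = sign(client["secret"], client_id, action, timestamp)
    if not hmac.compare_digest(expected, signature):  # constant-time comparison
        raise PermissionError("authentication failed")
    # Authorization: is this caller allowed to perform this action?
    if action not in client["scopes"]:
        raise PermissionError(f"{client_id} may not {action}")
```

The billing service can create tokens but is refused when it tries to detokenize, even with a valid signature.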

Add Logging, Monitoring, and Alerts

Systems need constant visibility. Logs record every action, including data access and token requests. Monitoring tools track system health and unusual patterns. For example, a sudden spike in requests may signal a problem. Alerts notify teams in real time so they can act quickly. Early detection prevents larger issues.
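The "sudden spike" check can be as simple as a sliding-window counter. The limit and window values are hypothetical and would be tuned per client or per endpoint; timestamps are passed in explicitly to keep the sketch testable.

```python
from collections import deque


class SpikeDetector:
    """Flag an alert when more than `limit` requests arrive within `window` seconds."""

    def __init__(self, limit: int, window: float) -> None:
        self.limit = limit
        self.window = window
        self._times: deque[float] = deque()

    def observe(self, timestamp: float) -> bool:
        self._times.append(timestamp)
        # Drop requests that have fallen out of the sliding window.
        while self._times and self._times[0] <= timestamp - self.window:
            self._times.popleft()
        return len(self._times) > self.limit  # True means "send an alert"


detector = SpikeDetector(limit=3, window=10.0)
print([detector.observe(t) for t in [0, 1, 2, 3]])  # [False, False, False, True]
```

In practice one detector would run per client ID, and a `True` result would feed the alerting system rather than a print.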

Test for Performance, Security, and Failover

Testing confirms that the system works under real conditions. Run load tests to check how it performs with high traffic. Conduct security tests to find weak points. Penetration testing helps identify gaps before attackers do. Test failure scenarios as well. If one server fails, another should take over without downtime. A reliable system handles failures without major disruption.
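Failover behavior is easy to test if the client tries replicas in order. In this sketch the backends are plain callables standing in for network clients (an assumption), and only `ConnectionError` is treated as retryable; a failover test simply injects a primary that always fails.

```python
from typing import Callable, Sequence


def call_with_failover(backends: Sequence[Callable[[str], str]], value: str) -> str:
    """Try each token-service replica in order; return the first successful answer."""
    errors = []
    for backend in backends:
        try:
            return backend(value)
        except ConnectionError as exc:  # only connectivity failures move to the next replica
            errors.append(exc)
    raise ConnectionError(f"all {len(backends)} backends failed: {errors}")


def primary(value: str) -> str:
    raise ConnectionError("primary down")  # simulated outage


def secondary(value: str) -> str:
    return "tok_" + value[-4:]             # hypothetical healthy replica


print(call_with_failover([primary, secondary], "4111111111111111"))  # tok_1111
```

Limiting retries to connectivity errors keeps a replica from masking genuine bugs such as invalid input.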

Technology Stack for Tokenization Platforms in 2026

Backend Technologies (High Performance & Secure)

Node.js vs Java vs Go for token services

Each language serves a different need. Node.js handles many concurrent requests, which suits API-heavy systems. Java offers stability and long-term support, often used in large enterprises. Go uses fewer resources and performs well under high load. The choice depends on team expertise and system demands.

Microservices vs Monolith: What Works Best

A microservices setup divides the platform into smaller services. Each service handles a specific task such as token generation or validation. This allows easier updates and better fault isolation. A monolith keeps all functions in one system. It is simpler to build and manage at the start. As systems grow, microservices offer more flexibility.

Databases for Token Vaults

Relational vs NoSQL for token mapping

Relational databases store structured data and maintain strict consistency. This works well for systems that need accurate mapping. NoSQL databases handle large volumes and flexible data structures. They scale easily across distributed systems. Some platforms combine both to balance consistency and performance.
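On the relational side, strict consistency comes from constraints and transactions. This sketch uses SQLite from the Python standard library purely for illustration; the `token_map` table, its columns, and the assumption that values are encrypted before insert are hypothetical.

```python
import sqlite3
from typing import Optional

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE token_map (
        token       TEXT PRIMARY KEY,  -- exactly one row per token
        ciphertext  BLOB NOT NULL,     -- value encrypted before insert (not shown)
        data_type   TEXT NOT NULL,     -- e.g. 'card', 'email'
        created_at  TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
    )
    """
)


def store(token: str, ciphertext: bytes, data_type: str) -> None:
    # `with conn` commits on success and rolls back on error,
    # so a mapping is either fully written or not written at all.
    with conn:
        conn.execute(
            "INSERT INTO token_map (token, ciphertext, data_type) VALUES (?, ?, ?)",
            (token, ciphertext, data_type),
        )


def lookup(token: str) -> Optional[bytes]:
    row = conn.execute("SELECT ciphertext FROM token_map WHERE token = ?", (token,)).fetchone()
    return row[0] if row else None
```

The primary key rejects a second mapping for the same token with `sqlite3.IntegrityError`, which is the kind of guarantee that makes relational stores attractive for token maps.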

Encryption-at-rest strategies

Stored data must remain protected at all times. Encryption at rest secures data inside databases. Even if storage is accessed without permission, the data stays unreadable without the keys. This only holds if the keys are kept outside the database, for example in a KMS or HSM.

Cloud Infrastructure Choices

AWS, Azure, GCP comparison

Cloud providers offer similar services with different strengths. AWS has wide global coverage and many service options. Azure works well with enterprise systems, especially those using Microsoft tools. GCP focuses on data processing and analytics. The choice depends on existing systems and budget.

Multi-cloud vs single-cloud setups

A single-cloud setup is easier to manage and deploy. It reduces operational complexity. A multi-cloud setup spreads workloads across providers. This reduces dependency on one vendor and improves system resilience. It requires careful planning to manage complexity.

API and Integration Layer

REST vs GraphQL for token access

REST APIs follow a simple structure and are widely used. They handle standard operations like token creation and retrieval. GraphQL allows clients to request only the data they need. This reduces extra data transfer and improves efficiency for complex queries.

API gateways and rate limiting

An API gateway manages incoming requests. It handles routing, security checks, and access control. Rate limiting restricts how many requests a user or system can send within a time frame. This prevents overload and protects against abuse.
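Rate limiting at a gateway is often implemented as a token bucket (the bucket's "tokens" are unrelated to data tokens). In this sketch the capacity and refill rate are hypothetical, and the clock is injectable so the behavior can be tested without sleeping.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity` requests, refilled at `rate` requests per second."""

    def __init__(self, capacity: float, rate: float, clock=time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # a gateway would typically answer HTTP 429 Too Many Requests
```

A gateway would keep one bucket per API key or client, so one noisy caller cannot starve the others.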

Security Technologies

HSM (Hardware Security Modules)

HSM devices store cryptographic keys in a secure environment. They keep keys separate from regular systems. This reduces the risk of key exposure during operations.

Key Management Systems (KMS)

A key management system handles key creation, storage, and rotation. It keeps keys organized and reduces human error in handling them. HSM and KMS work together to protect sensitive operations and maintain data security across the platform.
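Rotation works only if every protected record remembers which key version produced it. The `KeyRing` below is a toy stand-in for a KMS (a real KMS or HSM would not hand raw key bytes to the application), and HMAC is used as an example keyed operation; the class and function names are hypothetical.

```python
import hashlib
import hmac
import secrets


class KeyRing:
    """Toy KMS stand-in: creates keys, tracks the active version, keeps old versions."""

    def __init__(self) -> None:
        self._keys: dict[int, bytes] = {}
        self.active = 0
        self.rotate()

    def rotate(self) -> int:
        self.active += 1
        self._keys[self.active] = secrets.token_bytes(32)  # fresh random key
        return self.active

    def key(self, version: int) -> bytes:
        return self._keys[version]


def protect(ring: KeyRing, value: str) -> str:
    # New data always uses the active key, tagged with its version.
    version = ring.active
    tag = hmac.new(ring.key(version), value.encode(), hashlib.sha256).hexdigest()
    return f"v{version}:{tag}"


def verify(ring: KeyRing, value: str, protected: str) -> bool:
    version_text, tag = protected.split(":", 1)
    key = ring.key(int(version_text[1:]))  # older data still finds its (retired) key
    expected = hmac.new(key, value.encode(), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, tag)


ring = KeyRing()
old = protect(ring, "4111111111111111")
ring.rotate()
new = protect(ring, "4111111111111111")
```

After rotation, new records use version 2 while records made under version 1 still verify; a background job could later re-protect them and retire the old key.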

Architecture Design Patterns That Scale

Centralized Token Vault Architecture

A centralized vault stores all sensitive data in one secure location. The system generates tokens and maps them to real data inside this vault. This setup gives full control over access and data handling. Teams find it easier to manage audits and track data usage in this model. All records stay in one place, which simplifies monitoring. There is a trade-off. A single vault becomes a high-value target. Strong security controls and strict access rules are necessary to protect it.

Distributed Tokenization Systems

Distributed systems spread token services across multiple regions or nodes. Each location can handle token requests locally. This reduces delay for users in different parts of the world. It also reduces the risk of a single system failure. If one node goes down, others continue to operate. This setup requires careful coordination. Data consistency and synchronization need constant attention to avoid mismatches.

Stateless Tokenization Models

Stateless models avoid storing token mappings in a database. The system uses algorithms to generate tokens that can be verified later. This reduces storage needs and removes the risk tied to a central vault. It also improves speed in many cases. This model works well for systems that do not need frequent access to original data. It may not suit cases that require detailed tracking or data recovery.
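A minimal sketch of a stateless token: a keyed hash (HMAC-SHA256 here, an assumed choice) derives the token, and verification recomputes it instead of looking anything up. The key and `st_` prefix are hypothetical. The token cannot be turned back into the original value, which is why this model suits cases that rarely need the real data.

```python
import hashlib
import hmac

SECRET_KEY = b"example-key-kept-in-a-kms"  # hypothetical; load from a KMS/HSM in practice


def stateless_token(value: str) -> str:
    # Same input + same key -> same token, so nothing needs to be stored.
    digest = hmac.new(SECRET_KEY, value.encode(), hashlib.sha256).hexdigest()
    return "st_" + digest[:32]


def matches(value: str, token: str) -> bool:
    # Verification recomputes the token rather than querying a database.
    return hmac.compare_digest(stateless_token(value), token)


t = stateless_token("alice@example.com")
print(matches("alice@example.com", t), matches("bob@example.com", t))  # True False
```

Without the key, an attacker cannot precompute tokens for guessed values, which a plain unkeyed hash would allow.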

Event-Driven Tokenization Pipelines

Event-driven systems process data as it flows through the system. Each action triggers a step. For example, when a user submits data, the system tokenizes it before storage. This keeps sensitive data from spreading across services. Each stage handles only tokenized values. It fits well with real-time systems that process large volumes of data. Messaging systems and queues often support this design.
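The "tokenize before storage" step can be sketched with in-process queues standing in for a message broker (an assumption; production systems would use a real broker). The sensitive-field list and event shape are hypothetical.

```python
import queue
import secrets

SENSITIVE_FIELDS = {"card_number", "email"}  # hypothetical field list
vault: dict[str, str] = {}                   # stand-in for the token vault


def tokenize_stage(event: dict) -> dict:
    # Replace sensitive fields before the event moves downstream.
    clean = dict(event)
    for field in event.keys() & SENSITIVE_FIELDS:
        token = "tok_" + secrets.token_hex(8)
        vault[token] = event[field]
        clean[field] = token
    return clean


incoming = queue.Queue()    # raw events from the submitting service
to_storage = queue.Queue()  # only tokenized events ever reach this queue

incoming.put({"order_id": 42, "card_number": "4111111111111111", "email": "a@example.com"})

# Tokenization stage: consume raw events, emit tokenized ones.
while not incoming.empty():
    to_storage.put(tokenize_stage(incoming.get()))

stored = to_storage.get()
print(stored["order_id"], stored["card_number"].startswith("tok_"))  # 42 True
```

Every stage after `tokenize_stage` handles only tokens, so a compromised storage or analytics consumer never sees raw values.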

High Availability and Disaster Recovery Design

System downtime can affect business operations within minutes. High availability setups keep services running through multiple servers and load balancing. If one system fails, another takes over without delay. This reduces service interruptions. Disaster recovery plans focus on restoring systems after major failures. Regular backups and data replication reduce loss. Teams should test recovery plans often to confirm they work under real conditions.

How Much Does It Cost to Create a Data Tokenization Platform?

Building a data tokenization platform is not a fixed-cost project. The total budget depends on features, system complexity, team size, and compliance needs. A basic platform may start from around $40,000, while a full-scale enterprise system can cross $250,000.

Costs increase with security layers, performance requirements, and global deployment needs. Instead of looking at one total number, it helps to break the platform into individual components. This gives a clearer picture of where time and money go.

Below is a detailed breakdown of features, development time, and estimated cost ranges.

| Feature | Description | Duration (approx.) | Cost Range (USD) |
|---|---|---|---|
| Tokenization Engine | Generates tokens and manages mapping between tokens and original data | 3–5 weeks | $8,000 – $20,000 |
| Token Vault (Secure Storage) | Stores sensitive data in an encrypted and access-controlled environment | 4–6 weeks | $10,000 – $25,000 |
| Vaultless Tokenization Logic | Algorithm-based token creation without storing original data centrally | 3–4 weeks | $7,000 – $18,000 |
| API Development | APIs for token generation, retrieval, validation, and integration | 3–5 weeks | $8,000 – $20,000 |
| Authentication & Authorization | User identity checks, role-based access, and permission control | 2–4 weeks | $5,000 – $15,000 |
| Encryption & Key Management | Data encryption and secure key handling using KMS or similar tools | 2–3 weeks | $4,000 – $12,000 |
| Audit Logging & Monitoring | Tracks system activity and detects unusual access patterns | 2–3 weeks | $4,000 – $10,000 |
| Admin Dashboard | Interface for managing tokens, users, and system settings | 3–4 weeks | $6,000 – $15,000 |
| Multi-Region Deployment Setup | Infrastructure setup across regions for compliance and performance | 3–5 weeks | $8,000 – $20,000 |
| API Gateway & Rate Limiting | Controls API traffic and prevents misuse or overload | 2–3 weeks | $4,000 – $10,000 |
| Performance Optimization | Improves response time and handles high request volumes | 2–4 weeks | $5,000 – $12,000 |
| Security Testing & Pen Testing | Identifies vulnerabilities through controlled testing | 2–3 weeks | $5,000 – $15,000 |
| Failover & Disaster Recovery Setup | Backup systems and recovery plans for downtime scenarios | 2–4 weeks | $6,000 – $15,000 |

Real-World Use Cases That Drive Business Value

Payment Tokenization for Secure Transactions

Payment systems handle card data during every transaction. Tokenization replaces card numbers with tokens before storage or transfer. This reduces exposure during payment processing. Even if attackers intercept data, they cannot use the token outside the system. It also reduces the number of systems that store real card data. This helps companies meet payment security rules with less effort.

Tokenizing Customer Data for Personalization

Businesses rely on customer data to improve user experience. Storing real data across multiple systems increases risk. Tokenization replaces personal details with tokens. Systems still track user behavior using these tokens. This allows companies to personalize services without exposing private data. Customer privacy remains protected during analysis.

Protecting API Data Exchanges

APIs connect different systems and handle constant data exchange. Each request carries a risk if sensitive data is exposed. Tokenization keeps real data out of API responses. Systems share tokens instead of actual values. If an API is compromised, attackers cannot extract meaningful data. This adds an extra layer of protection during communication.
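A minimal sketch of keeping real data out of API responses: walk the JSON-like payload and swap sensitive fields for tokens before it leaves the service. The key list and the in-memory vault are hypothetical; nested objects and lists are handled recursively.

```python
import secrets
from typing import Any

SENSITIVE_KEYS = {"card_number", "ssn", "email"}  # hypothetical policy
vault: dict[str, Any] = {}


def tokenize_response(payload: Any) -> Any:
    """Return a copy of `payload` with sensitive fields replaced by tokens."""
    if isinstance(payload, dict):
        out = {}
        for key, value in payload.items():
            if key in SENSITIVE_KEYS and isinstance(value, (str, int)):
                token = "tok_" + secrets.token_hex(8)
                vault[token] = value
                out[key] = token
            else:
                out[key] = tokenize_response(value)  # recurse into nested data
        return out
    if isinstance(payload, list):
        return [tokenize_response(item) for item in payload]
    return payload


response = {
    "customer": {"name": "A. Jones", "email": "a@example.com"},
    "cards": [{"card_number": "4111111111111111", "brand": "visa"}],
}
safe = tokenize_response(response)
```

Applying the filter at a single exit point, such as response middleware, is easier to audit than relying on each endpoint to remember it.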

Secure Data Sharing Across Partners

Businesses often share data with partners and vendors. Sharing real data increases dependency and risk. Tokenization allows companies to share tokens instead. Partners can process data without seeing the original values. This limits exposure and keeps control within the organization.

Tokenization in AI and Data Analytics Pipelines

Data analysis requires large datasets. Using raw sensitive data in these systems increases risk. Tokenization replaces sensitive fields before data enters analytics pipelines. Teams can still analyze trends and patterns using tokens. This protects personal information while allowing data-driven decisions.
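Analytics still works on tokens as long as they are deterministic: the same customer always maps to the same token, so counts and joins survive. This sketch assumes an HMAC key dedicated to the analytics pipeline and lowercasing emails as normalization; both are illustrative choices.

```python
import hashlib
import hmac
from collections import Counter

ANALYTICS_KEY = b"analytics-pipeline-key"  # hypothetical; separate from other keys


def pseudonymize(email: str) -> str:
    # Deterministic keyed token: same customer -> same token, so aggregation still works.
    return hmac.new(ANALYTICS_KEY, email.lower().encode(), hashlib.sha256).hexdigest()[:16]


events = [
    {"email": "ana@example.com", "item": "book"},
    {"email": "ben@example.com", "item": "pen"},
    {"email": "Ana@example.com", "item": "lamp"},
]

# The sensitive field is replaced before the data enters the analytics pipeline.
tokenized = [{"customer": pseudonymize(e["email"]), "item": e["item"]} for e in events]

purchases_per_customer = Counter(row["customer"] for row in tokenized)
print(sorted(purchases_per_customer.values()))  # [1, 2]
```

Analysts can see that one customer bought twice without ever seeing who that customer is.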

Conclusion

Data protection now sits at the center of every digital system. Businesses handle large volumes of sensitive data each day, and even a small gap can lead to serious damage. Tokenization reduces this risk by limiting where real data exists and how it is accessed. It supports compliance, improves system safety, and keeps operations steady across industries. From payments to healthcare to AI systems, its role keeps growing in 2026. For organizations planning to adopt this model, working with an experienced provider makes a difference. Blockchain App Factory provides data tokenization platform development with a focus on security, performance, and practical implementation for real-world use cases.